We define Corrective Diffusion Language Models (CDLMs) as diffusion language models that have been post-trained with an absorbing-uniform mixture objective. This post-training enables the model to develop error-aware confidence, allowing it to assign lower confidence to potentially incorrect tokens and thereby support effective confidence-guided correction.

Standard MDLM training supervises only masked positions, so the model never learns to judge whether a visible token is wrong. To address this limitation, we propose a correction-oriented post-training principle based on an absorbing-uniform mixture objective. This approach explicitly supervises visible corrupted tokens alongside masked reconstruction, enabling the model to learn error-aware confidence.

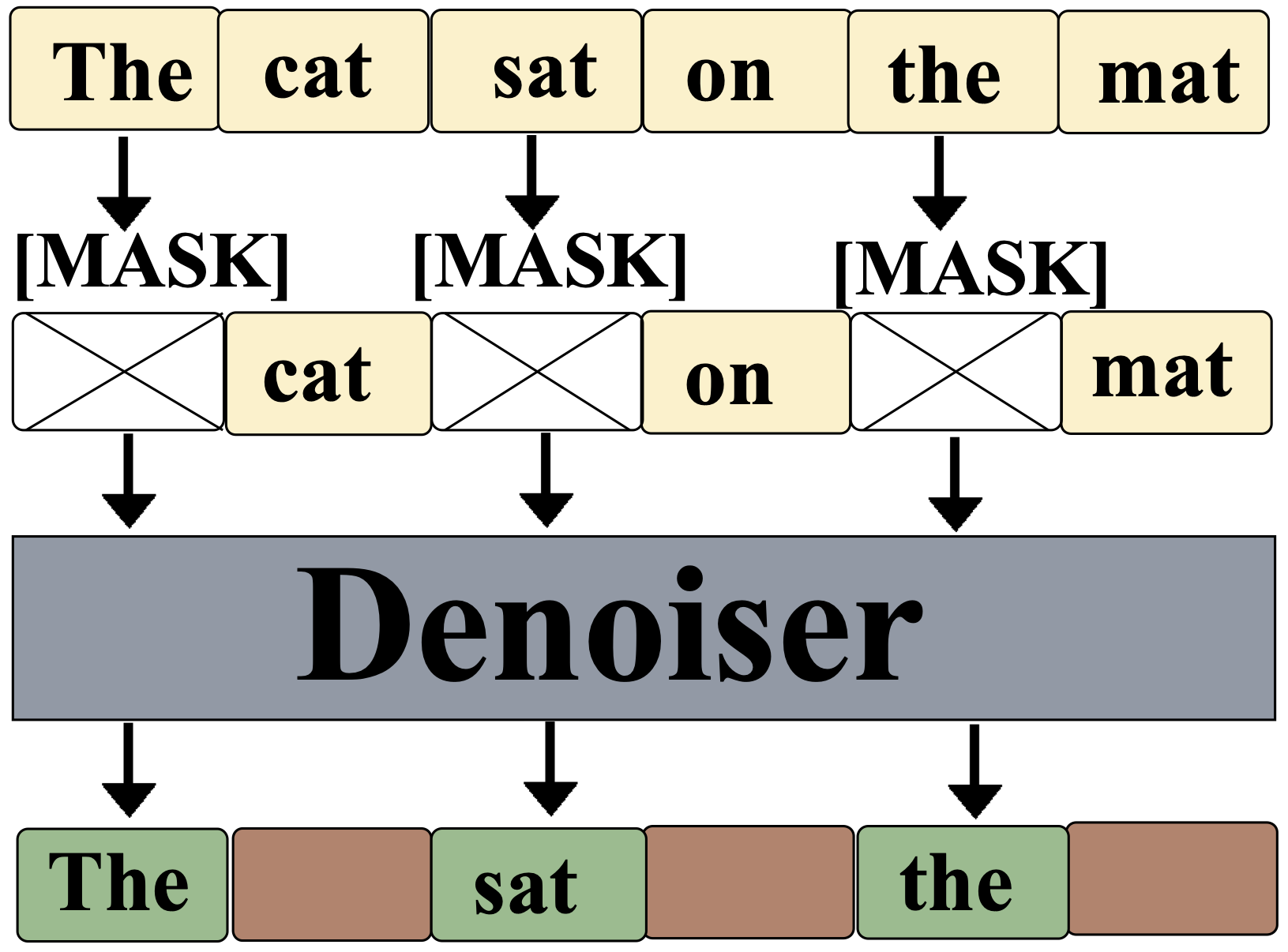

🔄 Two-Stage Mixture Corruption:

- Stage 1 (Absorbing-mask corruption): A fraction of tokens are replaced with [MASK], providing standard reconstruction supervision.

- Stage 2 (Uniform replacement corruption): Among the remaining visible tokens, some are replaced with randomly sampled incorrect tokens. These corrupted-but-visible positions receive explicit supervision.
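The two stages above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: `MASK_ID`, `mixture_corrupt`, `mask_ratio`, and `noise_ratio` are hypothetical names, token ids are plain integers with id 0 reserved for [MASK], and each position is corrupted independently.

```python
import random

MASK_ID = 0  # assumption: id 0 is reserved for [MASK]; real tokens use ids 1..vocab_size-1


def mixture_corrupt(tokens, vocab_size, mask_ratio, noise_ratio, rng):
    """Apply two-stage mixture corruption to one sequence of token ids.

    Returns (corrupted, masked_idx, noised_idx). Requires vocab_size >= 3 so
    that at least one incorrect, non-mask replacement token exists.
    """
    corrupted = list(tokens)
    masked_idx, noised_idx = [], []
    for i, tok in enumerate(tokens):
        if rng.random() < mask_ratio:
            # Stage 1: absorbing-mask corruption.
            corrupted[i] = MASK_ID
            masked_idx.append(i)
        elif rng.random() < noise_ratio:
            # Stage 2: uniform replacement among still-visible tokens.
            # Draw from the non-mask ids and skip over `tok`, so the
            # replacement is always an incorrect token.
            r = rng.randrange(1, vocab_size - 1)
            corrupted[i] = r + 1 if r >= tok else r
            noised_idx.append(i)
    return corrupted, masked_idx, noised_idx


rng = random.Random(0)
clean = [5, 9, 3, 7, 2, 8, 4, 6]
corrupted, masked_idx, noised_idx = mixture_corrupt(
    clean, vocab_size=12, mask_ratio=0.3, noise_ratio=0.2, rng=rng
)
```

Here `masked_idx` and `noised_idx` are disjoint by construction: a position is either masked, replaced by a wrong visible token, or left clean. Only the first two receive supervision.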

The key insight is that uniform replacement introduces explicit noise at visible positions, requiring the model to detect and correct corrupted tokens. The training objective becomes:

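$$
\mathcal{L} = \frac{1}{|\mathcal{M}|} \sum_{i \in \mathcal{M}} \ell_i + \lambda_{\text{noise}} \cdot \frac{1}{|\mathcal{N}|} \sum_{i \in \mathcal{N}} \ell_i
$$

Here $\mathcal{M}$ denotes the masked positions (standard reconstruction), $\mathcal{N}$ denotes the positions corrupted via uniform replacement, $\ell_i$ is the per-token prediction loss at position $i$, and $\lambda_{\text{noise}}$ weights the noise term. The first term preserves denoising capability, while the second term trains the model to recognize incorrect visible tokens, assign them low confidence, and predict the correct token in their place.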
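As a worked illustration of the objective, the sketch below combines per-token losses over the two position sets. The function name `mixture_loss` and the choice to treat an empty set as contributing zero are assumptions made for this example. The per-token losses are taken as given and would in practice come from the model.

```python
def mixture_loss(token_losses, masked_idx, noised_idx, lambda_noise=1.0):
    """Mean loss over masked positions plus a weighted mean over noised positions."""

    def mean_over(indices):
        # Assumption: an empty position set contributes zero to the loss.
        if not indices:
            return 0.0
        return sum(token_losses[i] for i in indices) / len(indices)

    return mean_over(masked_idx) + lambda_noise * mean_over(noised_idx)


# Four positions: 1 and 3 are masked, 0 was uniformly replaced, 2 is clean.
losses = [0.5, 2.0, 1.0, 3.0]
total = mixture_loss(losses, masked_idx=[1, 3], noised_idx=[0], lambda_noise=0.5)
print(total)  # 2.75 = (2.0 + 3.0) / 2 + 0.5 * 0.5
```

The clean position 2 is excluded from both sums, which matches the objective: only masked and uniformly replaced positions are supervised.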